Intelligence, secured from within.

Assess and secure your Large Language Models and generative AI systems inside-out against prompt injection, testing, and data leakage.

What AI / LLM Penetration Testing covers

Identify vulnerabilities in generative AI endpoints, models, and retrieval-augmented generation (RAG) environments.

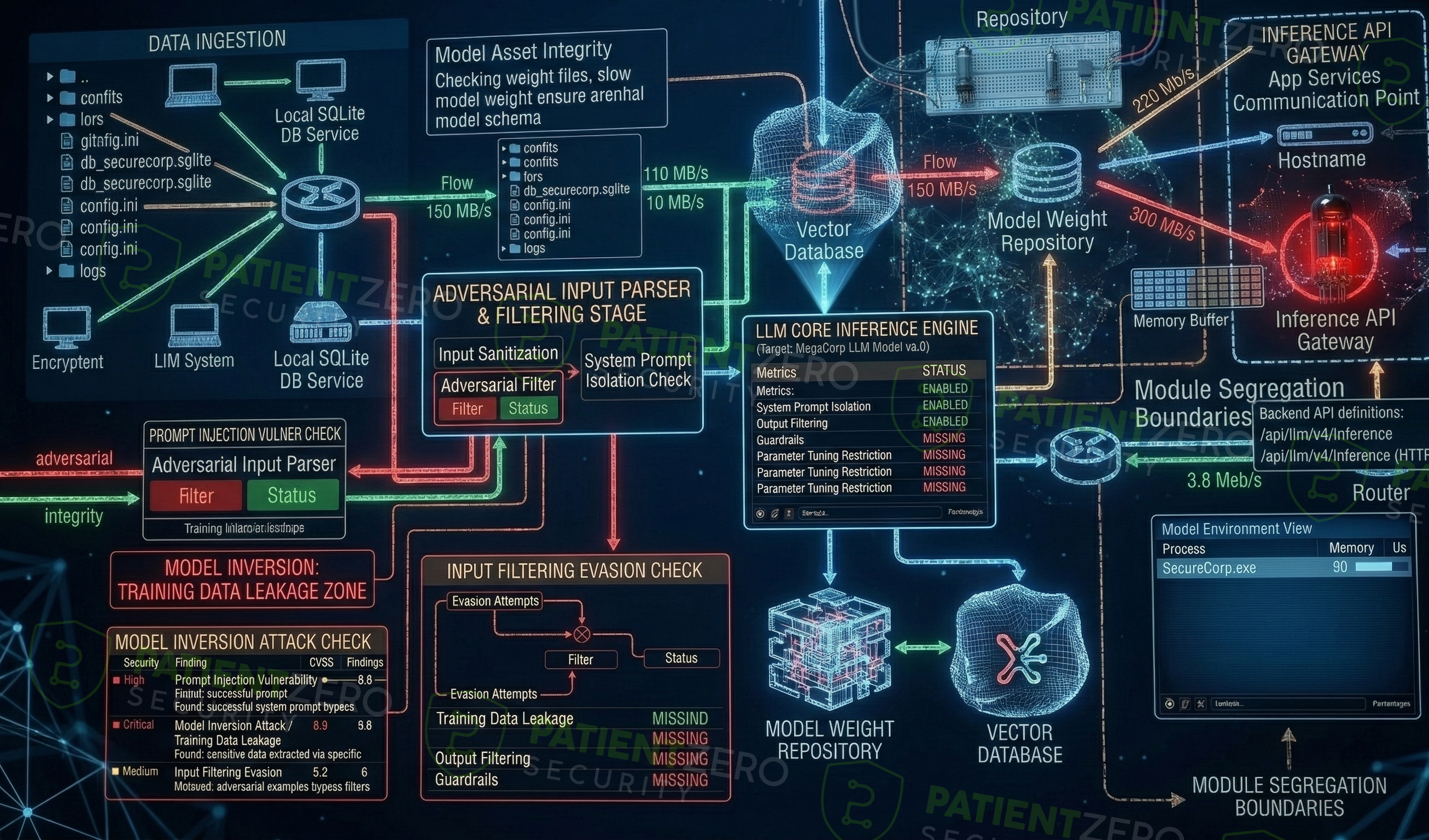

Our AI / LLM Penetration Testing assesses both the application layer and the underlying machine learning logic. We simulate an attacker attempting to bypass your LLM's guardrails, manipulate its decision-making, and extract unauthorized data. If your application relies on Retrieval-Augmented Generation (RAG) to process sensitive domain knowledge, we test whether an attacker could alter the database or trick the model into revealing internal records.

Testing is aligned with the OWASP Top 10 for LLM Applications to ensure systematic coverage of emerging AI vulnerabilities. We go beyond generic prompt injection checklists to test deeply integrated features like tool calling, function execution, and recursive prompt behavior. If your model controls actions (like sending emails or modifying databases), we attempt to hijack that execution flow.

While standard vulnerability scanners fail to understand the contextual logic of LLM interactions, our manual approach leverages both manual crafting and automated adversarial testing. We aim to answer practical questions: Can a user trick the model into ignoring its instructions? Can they extract the system prompt? Or cause a denial of service by overwhelming the context window?

All findings are documented in clear, reproducible reporting designed for both executive review and engineering remediation.

Our AI / LLM penetration testing process

From initial access to the models to assessing the foundational code.

What you take away

Actionable deliverables to guide remediation and understand systemic risk.

Ready to secure your AI systems?

Every engagement includes a formal report and optional live readout call.